I still remember the first time I heard about Locally Hosted LLM Agents – I was waiting in line for my morning coffee, doodling digital art concepts on my tablet. A friend mentioned how these agents could revolutionize the way we create and interact with digital art, but the conversation was quickly bogged down by talk of complicated setups and expensive hardware. It seemed like just another example of how technological advancements can sometimes feel more like hurdles than opportunities. As someone who’s passionate about bringing digital art into everyday life, I believe it’s time to cut through the hype and explore the real potential of Locally Hosted LLM Agents.

As a digital art curator and consultant, I’ve had the chance to work with these agents firsthand, and I’m excited to share my honest, experience-based advice with you. In this article, I promise to provide a no-nonsense guide to getting started with Locally Hosted LLM Agents, focusing on the practical applications and creative possibilities that can enhance your digital art practice. I’ll draw from my own experiences, sharing stories of how these agents have helped me push the boundaries of digital art and make it more accessible to everyone. My goal is to inspire you to explore the vibrant world of digital art, and to show you how Locally Hosted LLM Agents can be a powerful tool in your creative journey.

Unlocking Locally Hosted LLM Agents

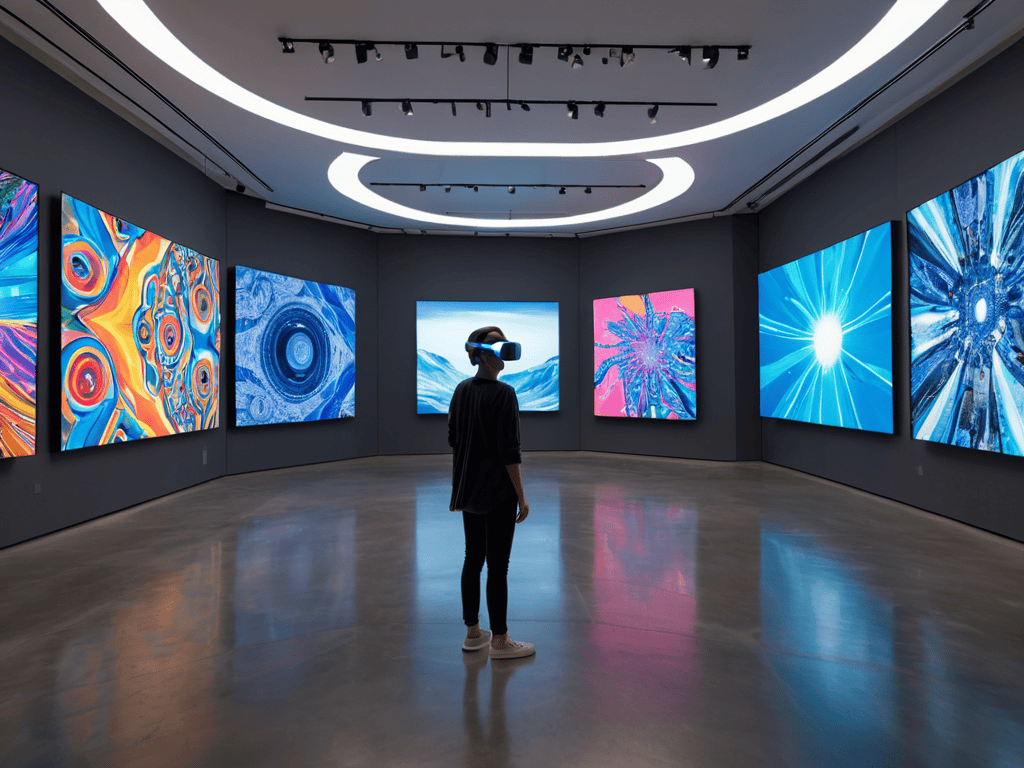

As I delve into the world of private language models, I’m fascinated by the potential of on-premise AI solutions to revolutionize the way we interact with digital art. By hosting these models locally, artists and curators can unlock new levels of creativity and innovation, unshackled by the limitations of cloud-based services. I recall a recent visit to a virtual reality art installation, where the use of self-hosted chatbots enabled a truly immersive experience, allowing viewers to engage with the artwork in a more intimate and personalized way.

The beauty of local machine learning deployments is that the whole system runs on hardware the artist controls. Once it is set up, artists can focus on their craft without depending on an outside service to keep their tools running. This, in turn, can lead to the creation of more complex and dynamic digital art pieces, pushing the boundaries of what is possible in the virtual realm. As someone who’s passionate about edge AI applications, I’m excited to see how locally hosted LLM agents can democratize access to these cutting-edge technologies.

By embracing locally hosted AI solutions, we can create a more decentralized and community-driven digital art ecosystem, where artists and enthusiasts can collaborate and share ideas more freely. As I sit here, doodling digital art concepts on my tablet, I’m inspired by the potential of these technologies to bring people together and foster a new era of creativity and innovation. The possibilities are endless, and I’m eager to see how on-premise AI solutions will continue to shape the future of digital art.

On-Premise AI Solutions for Edge AI

As I delve into the world of locally hosted LLM agents, I’m fascinated by the potential of on-premise AI solutions. These solutions offer a blend of security, flexibility, and control, allowing artists and creators to push the boundaries of digital art. By hosting AI models on their own servers, individuals can keep their data on hardware they control, while also unlocking new possibilities for innovation and experimentation.

The key to unlocking the full potential of locally hosted LLM agents lies in edge AI. By deploying AI models at the edge of the network, closer to the point of data collection, creators can reduce latency and improve real-time processing capabilities. This enables more dynamic and interactive digital art experiences, blurring the lines between physical and virtual worlds.

Revolutionizing Private Language Models

As I delve into the world of locally hosted LLM agents, I’m fascinated by the potential to revolutionize the way we interact with private language models. This shift could enable artists and creators to work more intuitively with AI, fostering a more collaborative and innovative process.

As I delve deeper into the world of locally hosted LLM agents, I’m constantly on the lookout for tools and resources that can help me better understand and implement these technologies. I often find myself exploring online forums and communities where people share their perspectives and experiences with AI and digital art, and I’m always excited to discover new platforms and initiatives that are pushing the boundaries of what’s possible in this space. Staying connected with these communities keeps me up to date on the latest developments in locally hosted LLM agents, and it inspires me to keep exploring the possibilities of digital art and innovation.

By bringing LLM agents into our personal spaces, we can start to explore new frontiers in digital art, where creative freedom knows no bounds.

Autonomous System Architecture

As I delve into the world of autonomous system architecture, I’m reminded of the countless hours I spent exploring virtual reality art installations. The way these immersive experiences seamlessly integrate with their surroundings has always fascinated me. Similarly, private language models can be designed to harmonize with their environment, allowing for more efficient and secure interactions. By leveraging on-premise AI solutions, individuals can create customized models that cater to their specific needs, much like a curated art exhibit.

The beauty of autonomous system architecture lies in its ability to enable self-hosted chatbots that can learn and adapt over time. This not only enhances user experience but also fosters a sense of personal connection, much like the nostalgic charm of analog entertainment. As someone who’s passionate about democratizing digital art, I believe that local machine learning deployments can play a vital role in making AI more accessible and relatable to everyone.

In the context of edge AI applications, autonomous system architecture can unlock a wide range of creative possibilities. By bringing AI capabilities closer to the user, individuals can unlock new forms of interactive storytelling and immersive experiences. As a digital art curator, I’m excited to explore the potential of autonomous system architecture in creating innovative and engaging art installations that inspire and delight audiences.

Deploying Autonomous Edge AI Applications

As I delve into the world of autonomous edge AI, I’m fascinated by the potential of real-time processing to transform our daily lives. By bringing AI capabilities closer to the source of the data, we can enable faster and more reliable decision-making, which is especially crucial in applications like smart homes or cities.

I’m excited to explore the possibilities of edge AI deployment, where locally hosted LLM agents can be used to develop personalized and adaptive experiences. This could lead to innovative applications in areas like virtual reality art, where I can combine my passion for digital media with the power of AI to create immersive and interactive exhibits.

Self-Hosted Chatbots for Local Machine Learning

As I delve into the world of locally hosted LLM agents, I’m fascinated by the potential of self-sustaining chatbots that can learn and adapt on our local machines. This technology has the power to revolutionize the way we interact with AI, making it more personal and intimate. I’ve had the chance to explore some amazing virtual reality art installations that incorporate chatbots, and it’s incredible to see how they can enhance the overall experience.

By leveraging on-device machine learning, we can create chatbots that are not only more responsive but also more secure and private. This approach allows us to tap into the unique capabilities of our local devices, unlocking new possibilities for digital art and innovation.
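To make the idea of a self-hosted chatbot a little more concrete, here is a minimal sketch of a chat session that keeps its conversation history on the local machine. Everything in it is an assumption for illustration: `LocalChatSession`, `max_turns`, and the `generate` callable are hypothetical names, and `echo_model` is only a stand-in for whatever local model you actually run.

```python
from typing import Callable, List, Tuple


class LocalChatSession:
    """Keeps the conversation in memory on this machine (hypothetical helper)."""

    def __init__(self, generate: Callable[[str], str], max_turns: int = 4):
        # `generate` is any function that maps a prompt string to a reply,
        # for example a wrapper around a locally running model.
        self.generate = generate
        self.max_turns = max_turns
        self.history: List[Tuple[str, str]] = []

    def build_prompt(self, user_message: str) -> str:
        # Include only the most recent turns so the prompt stays short.
        recent = self.history[-self.max_turns:] if self.max_turns > 0 else []
        lines = []
        for user, bot in recent:
            lines.append(f"User: {user}")
            lines.append(f"Assistant: {bot}")
        lines.append(f"User: {user_message}")
        lines.append("Assistant:")
        return "\n".join(lines)

    def ask(self, user_message: str) -> str:
        reply = self.generate(self.build_prompt(user_message)).strip()
        self.history.append((user_message, reply))
        return reply


def echo_model(prompt: str) -> str:
    """Stand-in for a real local model: repeats the latest user message."""
    user_lines = [line for line in prompt.splitlines() if line.startswith("User: ")]
    return "You said: " + user_lines[-1][len("User: "):]


if __name__ == "__main__":
    session = LocalChatSession(echo_model, max_turns=2)
    print(session.ask("Suggest a palette for a night scene"))
```

Swapping `echo_model` for a function that calls your own model is the only change needed; the history never leaves the process unless that function sends it somewhere.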

5 Essential Tips for Harnessing the Power of Locally Hosted LLM Agents

- Start Small: Begin by deploying locally hosted LLM agents for specific tasks or projects, allowing you to test and refine their capabilities before scaling up

- Choose the Right Hardware: Select hardware that is compatible with your locally hosted LLM agents and can handle the computational demands of running private language models

- Curate Your Data: Carefully select and prepare the data that you will use to train and fine-tune your locally hosted LLM agents, ensuring that it is relevant, accurate, and unbiased

- Monitor and Maintain: Regularly monitor the performance of your locally hosted LLM agents and perform maintenance tasks such as updating software and cleaning data to ensure optimal functionality

- Experiment and Innovate: Don’t be afraid to try new things and push the boundaries of what is possible with locally hosted LLM agents – experiment with different applications, integrations, and use cases to unlock their full potential

Key Takeaways for Bringing Locally Hosted LLM Agents Home

I’ve discovered that locally hosted LLM agents have the potential to revolutionize the way we interact with private language models, making them more accessible and secure for personal and professional use

By leveraging on-premise AI solutions for edge AI, we can unlock new possibilities for autonomous system architecture, enabling self-hosted chatbots and local machine learning capabilities that were previously unimaginable

Through the deployment of autonomous edge AI applications, I believe we can empower individuals to explore and incorporate digital art into their daily lives, fostering a more immersive and creatively inspired community that celebrates the beauty of innovation and technology

Embracing the Future of AI

As we bring locally hosted LLM agents into our personal and professional spaces, we’re not just decentralizing AI – we’re democratizing creativity, and unlocking a future where art, innovation, and technology converge in the most breathtaking ways.

Nichole Rogue

Conclusion

As we conclude our journey through the world of locally hosted LLM agents, it’s essential to reflect on the key takeaways. We’ve explored how these agents can revolutionize private language models, enabling on-premise AI solutions for edge AI. We’ve also delved into autonomous system architecture, discussing self-hosted chatbots for local machine learning and deploying autonomous edge AI applications. By understanding these concepts, we can unlock the full potential of locally hosted LLM agents and harness their power to transform our daily lives.

As we move forward, it’s crucial to remember that the true magic of locally hosted LLM agents lies in their ability to democratize access to AI technology. By bringing AI into our homes, studios, and local communities, we can inspire a new wave of creativity, innovation, and progress. Let’s embrace this exciting future, where technology and art converge to create a brighter, more wondrous world – one that is full of endless possibilities and waiting to be explored.

Frequently Asked Questions

How do locally hosted LLM agents handle data privacy and security for personal and sensitive information?

I’m often asked about data privacy and security when it comes to locally hosted LLM agents. Because the model runs on your own device, your prompts and files are stored and processed there rather than in the cloud, which adds a layer of protection for sensitive information. That holds only as long as the agent isn’t configured to call external services, so it’s worth checking where each request actually goes.
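One simple habit is to check that the address your agent talks to really is on your own machine. The sketch below is a rough illustration using only Python's standard library; `is_loopback_endpoint` is a hypothetical helper name, and the URLs are placeholders rather than the address of any particular tool.

```python
import ipaddress
from urllib.parse import urlparse


def is_loopback_endpoint(url: str) -> bool:
    """Return True if the URL's host is this machine (loopback only)."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        # Covers 127.0.0.0/8 and the IPv6 loopback address ::1.
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Any other hostname is treated as remote.
        return False


if __name__ == "__main__":
    # Placeholder URLs, assumed for illustration.
    for url in ("http://127.0.0.1:8080/v1", "https://api.example.com/v1"):
        print(url, "->", "local" if is_loopback_endpoint(url) else "remote")
```

Note that this check is deliberately strict: a model server on another computer in your studio (say, a `192.168.x.x` address) counts as remote here, even though it may still be on your own network.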

What are the minimum system requirements for hosting a locally hosted LLM agent, and how much maintenance is involved?

To run a locally hosted LLM agent, you’ll need a decent computer with at least 16 GB of RAM, a multi-core processor, and a solid-state drive. Larger models need more memory, so treat that as a starting point. Maintenance is relatively low, with occasional software updates and some tweaking to keep performance where you want it. I like to think of it as nurturing a digital pet: it needs a little care, but it’s totally worth it!
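If you want a rough feel for whether a model will fit in that 16 GB, a back-of-the-envelope estimate helps: the weights alone take roughly the number of parameters times the bytes used per weight. The sketch below encodes that rule of thumb. The function names and the 4 GB headroom for the operating system and working memory are my own assumptions, not fixed requirements.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_ram(params_billions: float, bits_per_weight: int,
                ram_gb: float, headroom_gb: float = 4.0) -> bool:
    """Rough check: weights plus an assumed headroom must fit in RAM."""
    return weight_memory_gb(params_billions, bits_per_weight) + headroom_gb <= ram_gb


if __name__ == "__main__":
    # A 7-billion-parameter model at two common precisions.
    for bits in (16, 4):
        need = weight_memory_gb(7, bits)
        print(f"7B at {bits}-bit: about {need:.1f} GB, "
              f"fits in 16 GB: {fits_in_ram(7, bits, 16)}")
```

By this estimate, a 7-billion-parameter model needs about 14 GB at 16-bit precision and about 3.5 GB at 4-bit, which is why smaller or quantized models are the usual choice on a 16 GB machine. Real usage will be somewhat higher once context and runtime overhead are counted.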

Can locally hosted LLM agents be integrated with other smart devices and virtual assistants in my home or studio for a seamless experience?

I’m excited to share that locally hosted LLM agents can indeed be integrated with other smart devices and virtual assistants, provided those devices expose some way to be controlled from your own software. Imagine your LLM agent interacting with your smart speakers, lights, and even virtual reality art installations to create a cohesive experience in your home or studio. It’s a game-changer for digital artists like myself!
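One common way to wire this up is to have the agent reply with a small JSON "action" that your own code then routes to the right device. The sketch below shows that pattern under loudly stated assumptions: the JSON shape, the `set_light` tool, and the `dispatch` function are all hypothetical, and the handler only returns a message where a real one would call your smart-home hub.

```python
import json
from typing import Callable, Dict

# Registry of actions the agent is allowed to trigger (hypothetical).
TOOLS: Dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Decorator that registers a handler under a tool name."""
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        TOOLS[name] = fn
        return fn
    return register


@tool("set_light")
def set_light(room: str, brightness: int) -> str:
    # Placeholder: a real handler would talk to your lighting system here.
    return f"{room} light set to {brightness}%"


def dispatch(model_output: str) -> str:
    """Parse the agent's JSON action and run the matching handler."""
    try:
        request = json.loads(model_output)
    except json.JSONDecodeError:
        return "error: output was not valid JSON"
    if not isinstance(request, dict):
        return "error: expected a JSON object"
    handler = TOOLS.get(request.get("tool"))
    if handler is None:
        return f"error: unknown tool {request.get('tool')!r}"
    return handler(**request.get("args", {}))


if __name__ == "__main__":
    action = '{"tool": "set_light", "args": {"room": "studio", "brightness": 40}}'
    print(dispatch(action))
```

Keeping an explicit registry means the agent can only trigger actions you have deliberately exposed, which matters once it is connected to physical devices.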